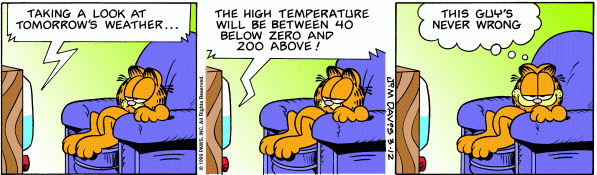

In research, the value reflecting ‘no effect’ is usually 0 (for differences, such as a difference in means) or 1.0 (for ratios, such as an odds ratio or relative risk). So, as with traditional significance testing, if the no-effect value lies inside the 95% confidence interval (CI), the difference between the treatments is not statistically significant at the 5% level. If it lies outside the CI, the difference is statistically significant. But CIs tell us more than ‘statistical significance.’ They give us an idea of the smallest and largest effects that are plausible given the observed data, and they convey how precisely the effect has been estimated. Narrow CIs usually arise in large studies that have reasonable ‘power’ to detect an effect, so the range of plausible values for the true effect is tight and the estimate is precise. On the other hand, wide CIs usually arise in small studies that have low power to detect an effect, so the estimate of the true effect is imprecise and provides limited information (as in the Garfield cartoon).
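To make the link between the no-effect value, study size, and precision concrete, here is a minimal Python sketch. It assumes a simple normal-approximation CI for a difference in two group means, with equal group sizes and a common, known standard deviation. The function names `ci_for_difference` and `excludes_null`, and all the numbers, are hypothetical and chosen only for illustration.

```python
import math

Z_95 = 1.96  # standard normal quantile for a two-sided 95% interval


def ci_for_difference(mean_diff, sd, n_per_group, z=Z_95):
    """Normal-approximation CI for a difference in two group means.

    Hypothetical assumptions: equal group sizes and a common, known SD,
    so the standard error is sd * sqrt(2 / n_per_group).
    """
    se = sd * math.sqrt(2.0 / n_per_group)
    return mean_diff - z * se, mean_diff + z * se


def excludes_null(ci, null_value=0.0):
    """True if the 'no effect' value lies outside the interval.

    Use null_value=0.0 for differences and 1.0 for ratios
    (ratio CIs are normally computed on the log scale first).
    """
    low, high = ci
    return not (low <= null_value <= high)


# Same observed difference (6 units) and SD (10 units), two study sizes.
small = ci_for_difference(6.0, 10.0, n_per_group=10)
large = ci_for_difference(6.0, 10.0, n_per_group=100)

for label, ci in [("n = 10 per group", small), ("n = 100 per group", large)]:
    width = ci[1] - ci[0]
    print(f"{label}: 95% CI {ci[0]:.1f} to {ci[1]:.1f} "
          f"(width {width:.1f}), excludes 0: {excludes_null(ci)}")
```

With the same 6-unit difference, the 10-per-group study gives an interval that includes 0 (not statistically significant), whereas the 100-per-group study gives one that excludes 0 and is about √10 ≈ 3.2 times narrower, because the standard error shrinks with the square root of the sample size.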

Made-up results: “The duration of postoperative analgesia following TKA was longer for patients given ropivacaine injected into the intraarticular wound space than into the extraarticular wound space [difference 6.0 h (95% CI 1.1 – 10.9 h)].” In the sample studied, the difference in duration of postoperative analgesia was estimated as 6 hours (in favor of intraarticular). We can be 95% confident that the true difference lies between 1.1 and 10.9 hours; that is, if the study were repeated many times and a CI calculated each time, about 95% of those intervals would contain the true difference. Some readers may feel that a difference of 6 hours is of minimal importance to their patients; furthermore, the lower limit of about 1 hour may reinforce their conclusion that the intraarticular approach is of little clinical interest. Others may feel that the CI is rather wide. They may worry about the study’s precision and question how closely the estimated 6-hour difference reflects the true difference. But this is “all good” because CIs provide estimates of both effect size and precision that foster this kind of thinking among researchers and clinicians; p-values cannot.
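The meaning of ‘95% confident’ is a statement about the procedure, which a short simulation can illustrate, and a reported interval also carries the information a p-value would. The Python sketch below assumes a normal approximation with a symmetric CI. The helpers `p_from_ci` and `simulate_coverage`, and the simulation settings (SD of 10 h, 30 patients per group), are hypothetical and are not taken from the made-up study.

```python
import math
import random
import statistics
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)  # about 1.96


def p_from_ci(estimate, low, high, z=Z_95):
    """Recover the SE and an approximate two-sided p-value from a 95% CI.

    Assumes the CI was built as estimate +/- z * SE (normal approximation).
    """
    se = (high - low) / (2 * z)
    z_stat = abs(estimate) / se
    return se, 2 * (1 - NormalDist().cdf(z_stat))


def simulate_coverage(true_diff=6.0, sd=10.0, n_per_group=30,
                      n_studies=2000, seed=1):
    """Fraction of simulated studies whose 95% CI contains true_diff.

    Hypothetical setup: two normal groups with a common, known SD.
    """
    rng = random.Random(seed)
    se = sd * math.sqrt(2.0 / n_per_group)
    hits = 0
    for _ in range(n_studies):
        # One simulated study: draw both treatment groups.
        group_a = [rng.gauss(true_diff, sd) for _ in range(n_per_group)]
        group_b = [rng.gauss(0.0, sd) for _ in range(n_per_group)]
        diff = statistics.fmean(group_a) - statistics.fmean(group_b)
        # Does this study's interval capture the true difference?
        if diff - Z_95 * se <= true_diff <= diff + Z_95 * se:
            hits += 1
    return hits / n_studies


# Made-up result from the text: 6.0 h (95% CI 1.1 to 10.9 h)
se, p = p_from_ci(6.0, 1.1, 10.9)
print(f"SE = {se:.2f} h, approximate p = {p:.3f}")
print(f"Simulated coverage of 95% CIs: {simulate_coverage():.3f}")
```

For the made-up result, the recovered SE is about 2.5 h and the approximate p-value about 0.016, while the simulated coverage should come out close to 0.95. The reverse does not hold: a p-value on its own does not tell you the size or precision of the effect.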

Next month, statistics in small doses will recap the importance of good data collection and management, particularly those statistical “hot spots” that can wreak havoc on statistical analysis and interpretation.